In the same way, neither constructing an aligned spin system nor checking its alignment is "free". As another example, consider the Szilard engine, which allows us to "extract" energy "from" information:

To go back to the example of the deck of cards: the deck would have needed to be prepared beforehand to be "ordered", and the action of "looking" at it to check what position each card is in is not "free" either. "Looking" (aka "measurement" or "observation") is not a "free" operation. The conservation of Gibbs free energy does not suddenly break down because we stop (or start) looking.

Entropy is a thermodynamical property of a bit of stuff, regardless of what we know about it.

> Entropy itself is whatever it is even before we bother calculating it, and we can estimate it different ways.

Knowing a lot about a system is equivalent to having low entropy about it. This is why having two different terms, "entropy" and "lack of information", that correspond to the same underlying physical concept is problematic: it causes this kind of confusion. Knowing something does not change the state of the system you are observing. Basically, your interpretation is that "we know a lot about the system, therefore entropy is low", whilst in a physical system it's the other way around: "entropy is low, therefore we know a lot about it".

> There is indeed some overlap (I have personally worked for a couple of years on applying information theory to calculate entropy in glass-forming materials), but not enough that you can just apply random concepts from one field to the other.

Obviously, a deck of cards can be in a lot of different microstates; I'm not sure what you mean. Consider that "macrostate" is another name for "distribution"? If you define a macrostate in such a way that it can have only one microstate, then yes, entropy is 0. Sure, we can use Shannon's formula in some cases, which is really just Boltzmann's formula with different units.
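The remark that Shannon's formula is Boltzmann's formula in different units can be illustrated with a minimal sketch. The function names and the toy eight-microstate macrostate are assumptions made for illustration only. The conversion used is the standard one: entropy in J/K equals k_B ln 2 times the entropy in bits.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def shannon_entropy_bits(probabilities):
    """Shannon entropy H = sum(p * log2(1/p)) in bits; p = 0 terms contribute nothing."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


def thermodynamic_entropy(probabilities):
    """Same quantity in J/K: S = k_B * ln(2) * H (hypothetical helper name)."""
    return K_B * math.log(2) * shannon_entropy_bits(probabilities)


# A macrostate compatible with exactly one microstate (e.g. a fully ordered deck).
single_microstate = [1.0]

# A toy macrostate compatible with 8 equally likely microstates (illustrative choice).
eight_microstates = [1 / 8] * 8

print(shannon_entropy_bits(single_microstate))   # one microstate: zero entropy
print(shannon_entropy_bits(eight_microstates))   # log2(8) bits
print(thermodynamic_entropy(eight_microstates))  # equals k_B * ln(8) for a uniform distribution
```

For a uniform distribution over W microstates, the Shannon expression reduces to log2(W), so multiplying by k_B ln 2 recovers Boltzmann's k_B ln W.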
> EDIT2: A specific example where both the thermodynamic entropy and the Shannon entropy can be experimentally seen to be equivalent:

Entropy goes to zero when spins align, but it does not mean that entropy is non-zero until we check that the spins are aligned. There is indeed some overlap (I have personally worked for a couple of years on applying information theory to calculate entropy in glass-forming materials), but not enough that you can just apply random concepts from one field to the other. However, there is tremendous overlap between the two camps.

> Very roughly speaking, the items higher on the list can be assigned to the “information theory” camp, while the items lower on the list can be assigned to the “thermodynamics” camp.

I am not impressed with that website in general, but in this instance I don't see anything wrong with that section.

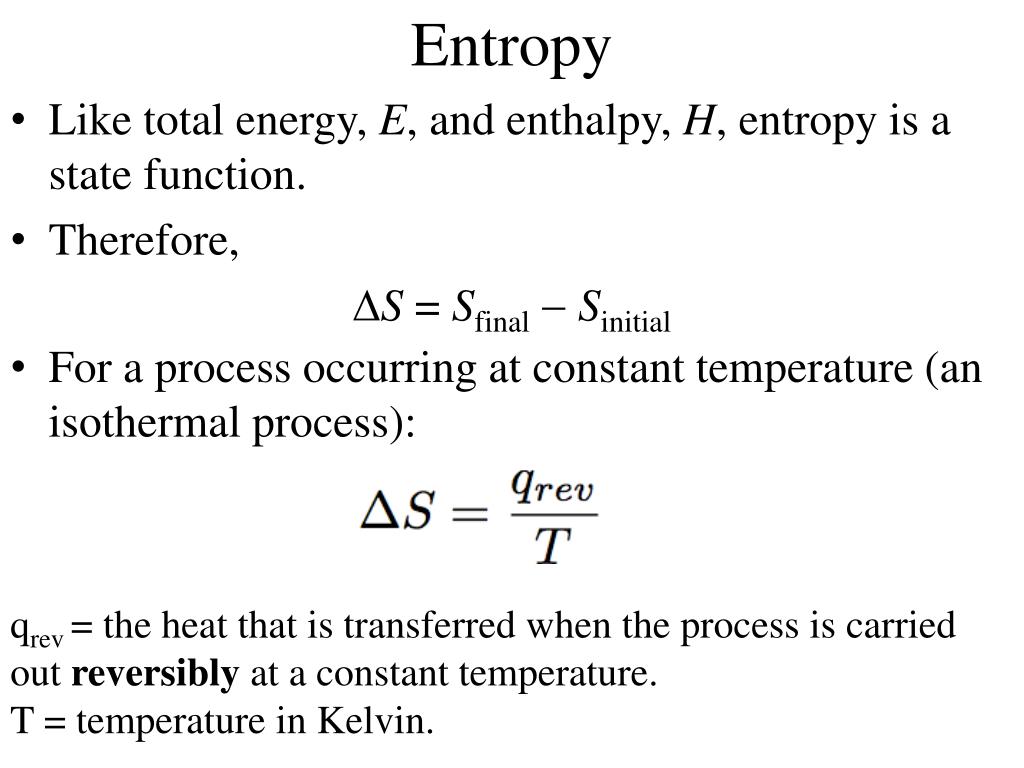

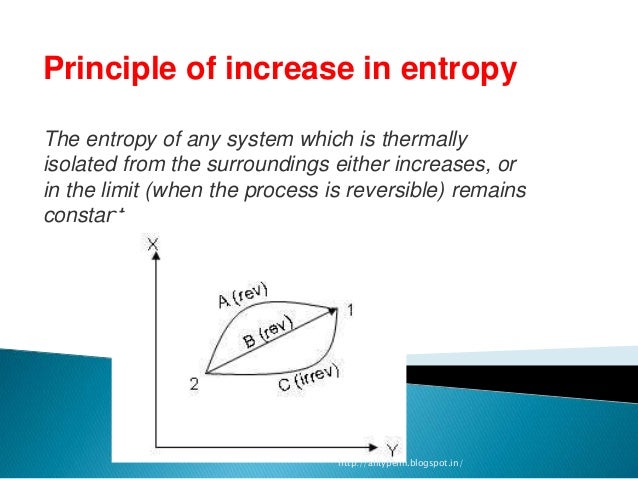

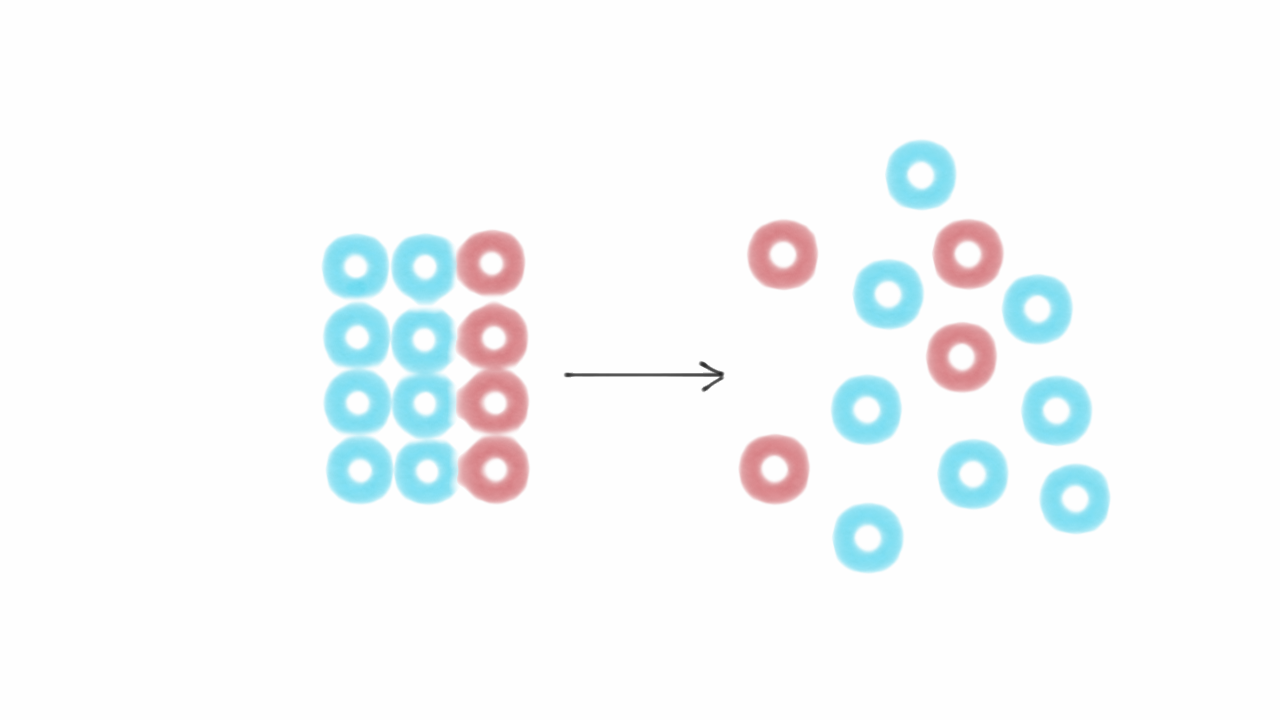

Energy tells you how difficult it is to put a bunch of particles (atoms, for example) in a given state. Entropy is how many of such states are possible. In a physical system it is related to how many arrangements of atoms/molecules/things there can be that would result in the system being in the state you can see. Ordered states (like crystals) have lower entropies than disordered states (like liquids), because there are fewer ways of arranging atoms that still give a crystal than there are combinations of possible positions for each atom in a liquid.

In isolated systems (i.e. they cannot exchange energy with anything else), entropy cannot decrease on average. In non-isolated systems, anything goes and entropy can go up or down locally all the time.

It is impossible to measure an absolute entropy in general (in the same way as it is impossible to measure an absolute energy in general). What we can usually do is measure how much something heats up when we give it energy, and work from there to deduce any entropy change using equations from thermodynamics or statistical physics.

There are lots of caveats, asterisks, and cases that look like exceptions; that's a quick and dirty description.
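The idea of deducing an entropy change from how much something heats when given energy can be sketched numerically. This is only an illustration under stated assumptions: heating is reversible, there is no phase transition in the temperature range, and the heat capacity values are made up (a constant 4184 J/K, roughly 1 kg of water). The relation used is the standard thermodynamic one, ΔS = ∫ C(T)/T dT; the function name `entropy_change` is hypothetical.

```python
import math


def entropy_change(temperatures, heat_capacities):
    """Trapezoidal estimate of Delta S = integral of C(T)/T dT, in J/K.

    temperatures: increasing temperatures in kelvin
    heat_capacities: heat capacity in J/K measured at each temperature
    """
    total = 0.0
    for i in range(1, len(temperatures)):
        t0, t1 = temperatures[i - 1], temperatures[i]
        f0 = heat_capacities[i - 1] / t0
        f1 = heat_capacities[i] / t1
        total += 0.5 * (f0 + f1) * (t1 - t0)
    return total


# Assumed data: constant heat capacity, heated from 300 K to 350 K.
temps = [300 + 0.1 * i for i in range(501)]
caps = [4184.0] * len(temps)

numeric = entropy_change(temps, caps)
# For a constant heat capacity the integral has a closed form: C * ln(T_final / T_initial).
analytic = 4184.0 * math.log(temps[-1] / temps[0])

print(numeric, analytic)
```

Only the change in entropy comes out of this calculation, which matches the point that an absolute entropy is not directly measurable in general.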
